Today, artificial intelligence is an intermediary between the user and the site. Chatbots, AI search, and generative answers use the content of web resources to generate results for people. That is why new tools for interacting with AI are emerging, one of which is the LLMS.txt file. This is a special text document that helps large language models understand the structure of the site and find the most important content faster.

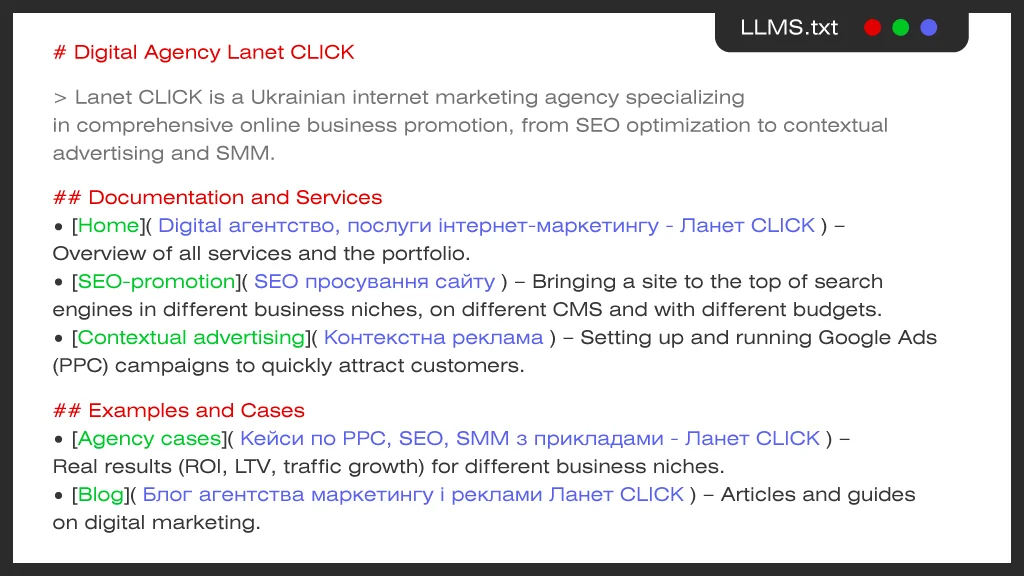

LLMS.txt is a text file placed in the root directory of a website (served at /llms.txt) that provides guidance for large language models (LLMs) working with text, such as chatbots or AI search. The file is usually written in Markdown, a lightweight markup language that is easy for both humans and algorithms to read.

LLMS.txt is most often used to include:

This is a new, proposed standard that is only starting to be adopted and is becoming part of the approach to optimizing a website for LLMs. The main task of LLMS.txt is to show the AI which pages of the website are most important and how to interpret them correctly.

LLMS.txt is designed to help the AI:

The LLMS.txt file works as a set of hints for AI bots. In it, the site owner lists which pages should be analyzed first and which are secondary and can be skipped. The file does not block access to anything; it only signals priority. Thanks to this, the AI receives a structured map of the resource and finds relevant content faster.

In fact, the structure of the LLMS.txt file forms a clear list of priority materials, which helps models interpret information more accurately. This approach is increasingly used in site optimization for ChatGPT and other AI systems.

When a bot or AI agent visits the site, it can refer to LLMS.txt and read the specified instructions. Next, the algorithm:

As a result, the AI gets a clearer understanding of the site’s content, which helps to form more accurate answers and use relevant sources of information.
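As a rough illustration of the agent-side flow, the Python sketch below derives the file's location from any page URL and collects the links listed in it. The function names and the example.com URLs are hypothetical, and real AI systems may process the file differently.

```python
import re
from urllib.parse import urljoin

# Matches Markdown links of the form [title](url).
LINK_RE = re.compile(r"\[([^\]]+)\]\(([^)\s]+)\)")


def llms_txt_url(site_url: str) -> str:
    """Return the root-level location of the file for any URL on the site."""
    return urljoin(site_url, "/llms.txt")


def extract_links(llms_text: str, base_url: str) -> list[tuple[str, str]]:
    """Collect (title, absolute URL) pairs for every link in the file."""
    return [
        (title, urljoin(base_url, href))
        for title, href in LINK_RE.findall(llms_text)
    ]


sample = "- [Services](/services): What we offer"
print(llms_txt_url("https://example.com/blog/post"))
print(extract_links(sample, "https://example.com"))
```

An agent could then fetch the returned URLs in the order they appear, which is how the file's ordering turns into a reading priority.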

LLMS.txt, robots.txt and sitemap.xml serve different purposes. Robots.txt defines which pages bots are allowed or forbidden to crawl, while sitemap.xml lists the pages of the site for indexing. By contrast, LLMS.txt neither restricts access nor maps every URL; it helps AI systems understand which content has priority. It is a content guidance tool for AI that tells models which materials to pay attention to when analyzing a site.

| File | Who is it for | Main purpose | Main difference |
| --- | --- | --- | --- |
| robots.txt | Search bots (Google, Bing) | Access control: specifies which pages of the site may be crawled and which are blocked | Controls crawler access (AI crawlers that honor it included); gives no guidance about content |
| sitemap.xml | Search engines | Navigation: shows a complete list of pages for quick indexing | Sitemap for efficient indexing, contains no blocking rules |
| llms.txt | LLMs and AI agents (ChatGPT, Claude, Perplexity) | Semantic context: provides a concise overview of site content specifically for AI | Designed specifically to guide AI systems, not search engines |
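To make the access-control role of robots.txt concrete, here is a minimal Python sketch using the standard library's `urllib.robotparser`. The rules, the bot name `ExampleBot`, and the URLs are made up for illustration.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content: every bot is kept out of /admin/.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
"""


def is_allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Check whether the given bot may crawl the URL under these rules."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


print(is_allowed(ROBOTS_TXT, "ExampleBot", "https://example.com/blog/"))
print(is_allowed(ROBOTS_TXT, "ExampleBot", "https://example.com/admin/users"))
```

Robots.txt answers a yes-or-no question about access. LLMS.txt has no equivalent of `Disallow`; it only lists and describes the pages the owner considers most useful.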

Each element in LLMS.txt has a clear purpose and simplifies the analysis of pages by AI crawlers – automatic bots that collect data for large language models.

# – project name. This is a first-level heading. It indicates the name of the site or project and briefly describes its purpose. Thanks to this, the AI immediately understands what resource it is about.

> – short description. The > symbol is used for quotes or explanatory blocks. This is usually where a short description of the site or its content is placed to give the AI general context.

## – sections. The double sign ## creates subsections. They group pages by content, such as main materials, documentation, guides or reference content.

Each entry consists of three parts:

The ## Optional section has a special meaning. It is used to place secondary or auxiliary content. If the model is working with limited context or data, it can skip this section and focus on the main pages. This helps the AI find the most valuable information faster.
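The elements described above can be read mechanically. The Python sketch below is one possible parser, not a reference implementation: it assumes each entry is a Markdown list item of the form `- [title](url): note`, and that the secondary section is headed "Optional" as in the published llms.txt proposal (the `skip` parameter can be changed if a site uses another heading). The class, function names, and sample content are hypothetical.

```python
import re
from dataclasses import dataclass, field

# Assumed entry shape: "- [title](url)" with an optional ": note" suffix.
ENTRY_RE = re.compile(
    r"^-\s*\[(?P<title>[^\]]+)\]\((?P<url>[^)\s]+)\)(?::\s*(?P<note>.*))?$"
)


@dataclass
class LlmsTxt:
    title: str = ""
    summary: str = ""
    sections: dict[str, list[tuple[str, str, str]]] = field(default_factory=dict)


def parse_llms_txt(text: str) -> LlmsTxt:
    """Split the file into project name, short description, and sections."""
    doc = LlmsTxt()
    current = None
    for raw in text.splitlines():
        line = raw.strip()
        if line.startswith("## "):            # section heading
            current = line[3:].strip()
            doc.sections[current] = []
        elif line.startswith("# ") and not doc.title:   # project name
            doc.title = line[2:].strip()
        elif line.startswith(">") and not doc.summary:  # short description
            doc.summary = line[1:].strip()
        elif current is not None:
            match = ENTRY_RE.match(line)
            if match:
                doc.sections[current].append(
                    (match["title"], match["url"], match["note"] or "")
                )
    return doc


def priority_entries(doc: LlmsTxt, skip: tuple[str, ...] = ("Optional",)):
    """Return entries from all sections except the skippable ones."""
    return [
        entry
        for name, entries in doc.sections.items()
        if name not in skip
        for entry in entries
    ]


SAMPLE = """\
# Example Site
> Short description of the site.

## Docs
- [Getting started](https://example.com/start): Setup guide

## Optional
- [Changelog](https://example.com/changelog)
"""

doc = parse_llms_txt(SAMPLE)
print(doc.title, "|", doc.summary)
print(priority_entries(doc))
```

A model with a tight context budget would work from `priority_entries(doc)` and fall back to the full `doc.sections` only when it has room for the secondary material.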

Creating an LLMS.txt file for AI is a fairly simple process, but it requires attention to detail. The main thing is to correctly identify the key pages of the site and arrange them in a clear structure. Basic steps:

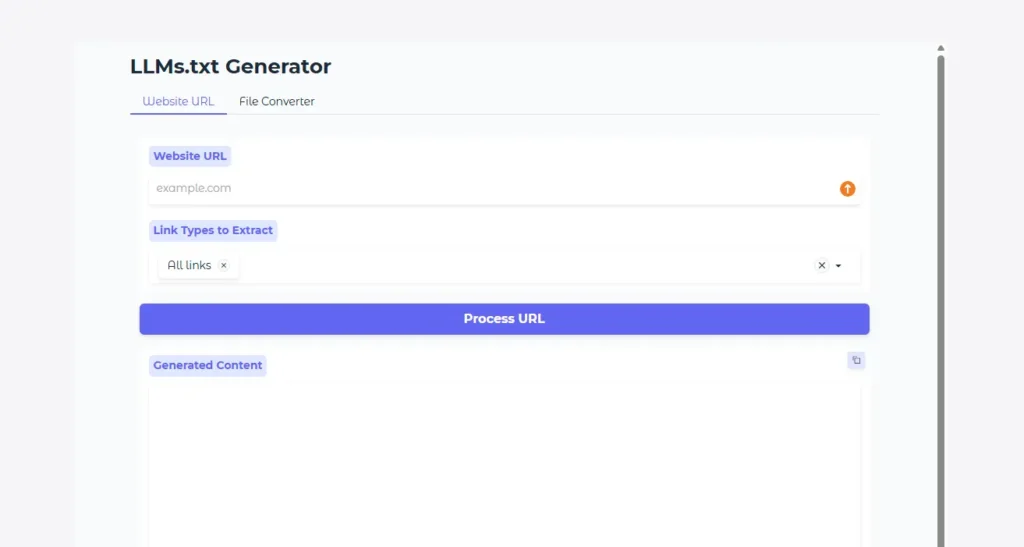

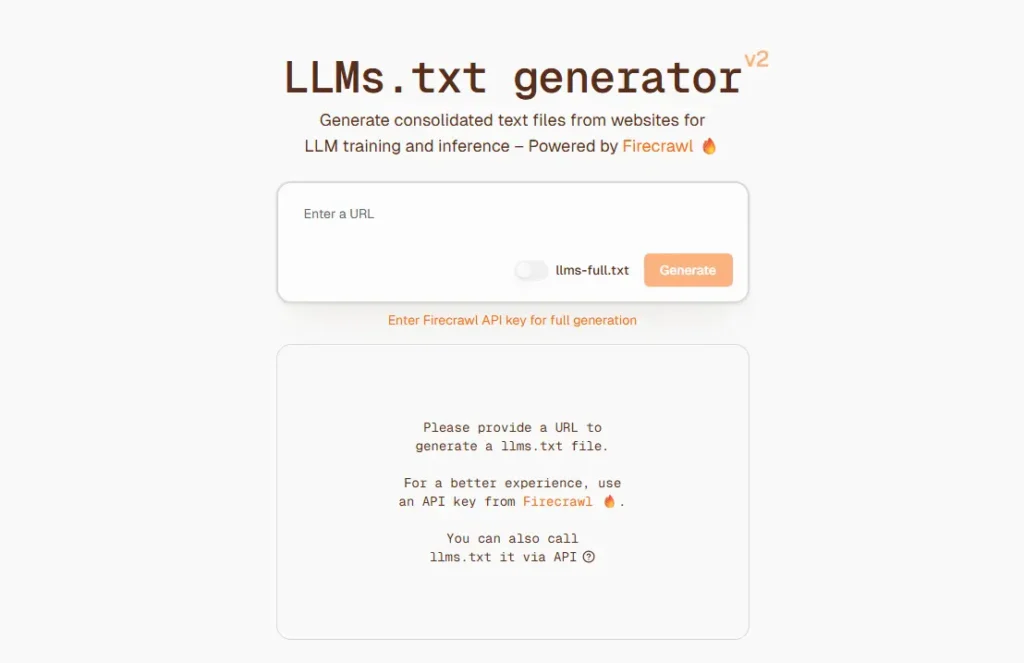
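For a small site, the file can also be assembled with a short script. The following Python sketch is a minimal example under assumed inputs: the site name, description, section names, and URLs are placeholders, and `build_llms_txt` is a hypothetical helper rather than part of any tool.

```python
def build_llms_txt(
    title: str,
    summary: str,
    sections: dict[str, list[tuple[str, str, str]]],
) -> str:
    """Render a project name, short description, and link sections as Markdown."""
    lines = [f"# {title}", "", f"> {summary}", ""]
    for name, pages in sections.items():
        lines.append(f"## {name}")
        lines.append("")
        for page_title, url, note in pages:
            entry = f"- [{page_title}]({url})"
            if note:  # the description after the link is optional
                entry += f": {note}"
            lines.append(entry)
        lines.append("")
    return "\n".join(lines).rstrip() + "\n"


content = build_llms_txt(
    "Example Site",
    "A demo site.",
    {
        "Main pages": [
            ("Services", "https://example.com/services", "What we offer"),
            ("Blog", "https://example.com/blog", ""),
        ]
    },
)
print(content)
```

The resulting text would be saved as llms.txt and uploaded to the site root, then checked by opening it in a browser.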

If you are unable to create the file yourself or need it done faster, consider ordering GEO (generative engine optimization) for the site from professionals. As part of such a service, specialists can help create and structure the LLMS.txt file. You can also use dedicated online tools.

Some services automatically generate LLMS.txt based on the structure of the site. Among the popular tools are:

The LLMS.txt file helps AI systems find relevant information on a site faster and interpret it correctly when generating answers. This can help chatbot and AI search results reflect your data more accurately. A structured file may also increase the chances of receiving AI traffic, that is, visits from users who reach your site through an AI-generated response.

The LLMS.txt file helps to:

Although the LLMS.txt standard is still in its infancy, early implementation can provide a strategic advantage. The file helps structure information about your resource and can make it easier for AI crawlers to navigate your site structure. In the context of website promotion, this means adapting to the era of generative search, where AI generates answers based on specific sources. Companies that start optimizing earlier can gain better visibility and a competitive advantage in the AI traffic ecosystem.

The LLMS.txt file is a new tool for interacting with AI systems. It helps structure important content and tells AI models which pages to use when generating answers. Although this format is not yet an official web standard, its implementation is seen as an important element of optimizing a site for AI and preparing for new search formats.

In the long term, LLMS.txt can become part of a broader strategy for working with the AI ecosystem. Using such tools together with SEO, technical optimization, and content marketing forms a comprehensive website promotion strategy that helps brands remain visible in the era of generative search and AI traffic.
